How much performance improvement can we expect from the load-balancer in Futurama Web applications for various scenarios and user loads?

Introduction

In Futurama 18.02 we introduced a new load-balancing system in Futurama Webservice, intended to spread requests over multiple instances of the Futurama calculation engine and thereby allow higher performance. From Futurama 18.08 onward we offer the same functionality for Futurama Web. In this document we explain the concept behind this system and show the benefit for various scenarios in Futurama Web.

Concept

The load-balancer works by instantiating multiple Futurama calculation engines within the IIS application, keeping the data and instruction flow of each engine separated. Each of these engines runs in its own thread, allowing multiple requests to be handled in parallel. Every user is assigned to one of these engines, where their data is held in memory. Any subsequent requests from the same user are directed to this same engine until the session expires.
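The assignment of users to engines described above can be pictured with a small sketch. This is only an illustration of session affinity, not Futurama's implementation: the class name `StickyRouter`, the round-robin choice of engine for new sessions, and the timeout value are all assumptions made for the example.

```python
import itertools
import time


class StickyRouter:
    """Assigns each session to one engine and keeps it there until expiry."""

    def __init__(self, engine_count, session_timeout_s=1200.0, clock=time.monotonic):
        # Round-robin over engine indices; the real assignment policy is not
        # documented, so this choice is an assumption.
        self._engines = itertools.cycle(range(engine_count))
        self._timeout = session_timeout_s
        self._clock = clock
        self._sessions = {}  # session id -> (engine index, last-seen timestamp)

    def route(self, session_id):
        """Return the index of the engine that should handle this request."""
        now = self._clock()
        entry = self._sessions.get(session_id)
        if entry is not None and now - entry[1] <= self._timeout:
            engine = entry[0]             # live session: stay on the same engine
        else:
            engine = next(self._engines)  # new or expired session: next engine
        self._sessions[session_id] = (engine, now)
        return engine


router = StickyRouter(engine_count=3)
print([router.route(user) for user in ["alice", "bob", "alice", "carol", "bob"]])
# prints [0, 1, 0, 2, 1]
```

Repeated requests from the same user land on the same engine index, which is what allows that user's data to stay in the memory of a single engine.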

Performance gains

When a user of a Futurama Web application sends a request, part of the work is performed by Microsoft IIS, which already handles requests on multiple threads. The rest of the work is performed by Futurama’s calculation engine, which allows only a single thread to run at a time. The load-balancer makes this part of the work multithreaded as well, which benefits any requests that rely heavily on Futurama calculations. To show this distinction, we generated timing results for 3 different scenarios on a test server with 4 processors. We varied the number of load-balancing engines to see the benefits for different user loads.

Performance metrics

· Response time: From a user-centered perspective, the performance of an HTTP request is the time required to complete the user’s request.

· Throughput: From a server perspective, the number of requests served per second is another performance metric.

The presented numbers are relative to the same scenario run on Futurama Web without load-balancing for a specific user load. So if the response time has a value of 75%, the gain in response time is 25%.
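The arithmetic behind these relative values can be written out as a short sketch. The function names and the measurements below are hypothetical and serve only to illustrate the calculation; they are not taken from the test runs.

```python
def relative_percent(measured, baseline):
    """Express a measurement as a percentage of the baseline measurement."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return 100.0 * measured / baseline


def response_time_gain(relative):
    """Gain for a 'lower is better' metric: 75% of baseline is a 25% gain."""
    return 100.0 - relative


def throughput_gain(relative):
    """Gain for a 'higher is better' metric: 150% of baseline is a 50% gain."""
    return relative - 100.0


# Hypothetical response times in milliseconds, for illustration only.
baseline_ms, with_lb_ms = 800.0, 600.0
rel = relative_percent(with_lb_ms, baseline_ms)
print(rel, response_time_gain(rel))  # prints 75.0 25.0
```

Note that the direction of the gain differs per metric: for response time a value below 100% is an improvement, while for throughput a value above 100% is.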

Timing results

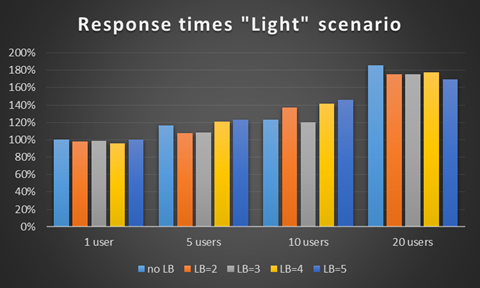

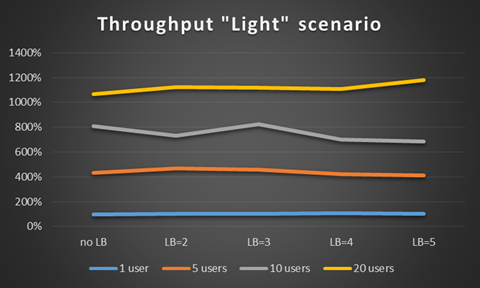

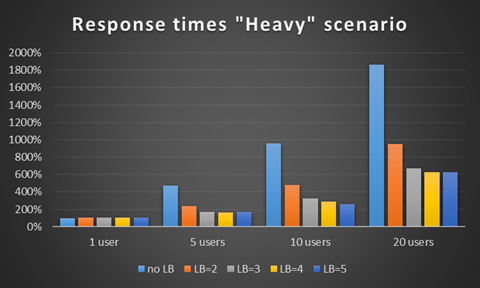

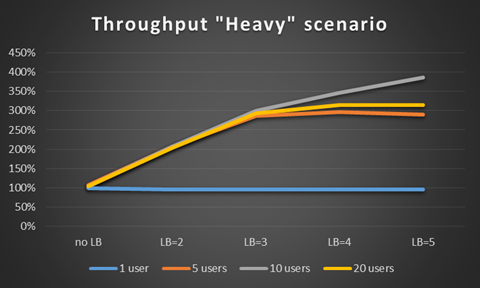

In the following results, the response times and server throughput can be compared while varying the user load and the number of load-balancing engines (shortened in the charts as “LB”).

We normalized all results to a value relative to the scenario without load-balancing for a single continuous user-load.

Light

This scenario consists of requests for which Futurama calculations are responsible for only around 5% of the response time.

In this light scenario, adding more load-balancing engines yields little improvement. However, it is clear that the application scales nicely: higher user loads increase the server’s throughput, while the response times are kept from ballooning.

Heavy

This scenario consists of requests for which Futurama calculations are responsible for about 95% of the response time.

In these results, it can be seen that enabling the load-balancer makes a huge difference for both the response times and the server throughput. At a certain point the server’s available processor time becomes the bottleneck for further improvements.
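One rough way to reason about where this bottleneck appears is a back-of-the-envelope model. It is a simplification and not a description of Futurama's internals or a substitute for the measurements: it assumes a saturated server, identical requests, one request at a time per engine, and that all request time is processor time. The function names and the default request time are made up for the example.

```python
def max_throughput(engines, calc_fraction, processors, request_time_s=1.0):
    """Upper bound on requests per second under the stated assumptions."""
    if engines < 1 or processors < 1 or not 0.0 < calc_fraction <= 1.0:
        raise ValueError("need engines >= 1, processors >= 1, 0 < calc_fraction <= 1")
    # Each engine handles one request at a time and is busy for the
    # calculation part of every request it serves.
    engine_limit = engines / (calc_fraction * request_time_s)
    # All processors together cannot do more than `processors` seconds of
    # work per second (assumes the whole request is processor time).
    cpu_limit = processors / request_time_s
    return min(engine_limit, cpu_limit)


def relative_throughput(engines, calc_fraction, processors):
    """Throughput relative to a single engine on the same server."""
    baseline = max_throughput(1, calc_fraction, processors)
    return max_throughput(engines, calc_fraction, processors) / baseline


for label, fraction in [("light", 0.05), ("heavy", 0.95)]:
    row = [round(relative_throughput(n, fraction, processors=4), 2) for n in (1, 2, 3, 4, 5)]
    print(label, row)
```

In this model the light scenario stays flat regardless of the number of engines, while the heavy scenario improves with each added engine until the 4 processors are saturated. Real measurements will deviate from this because of scheduling, memory and I/O effects that the model ignores.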

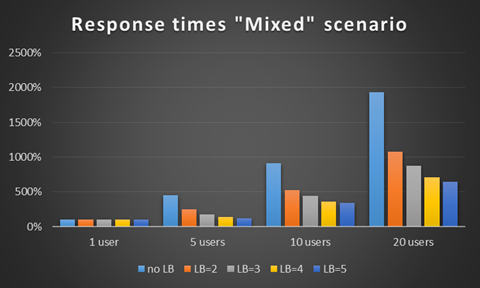

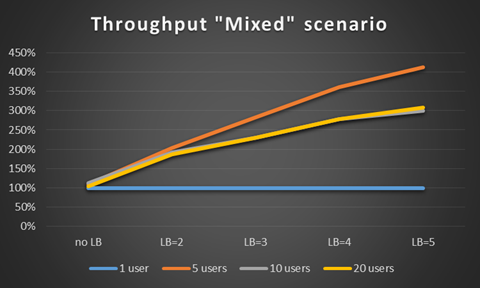

Mixed

This scenario contains mixed requests, consisting of 66% light, 17% heavy and 17% delaying requests (delaying request: a request that has to wait 2 seconds for a remote webservice call, which uses very little processing power).

In this mixed scenario, the improvement shows a trend similar to that of the Heavy scenario, with response times considerably reduced during heavy user load.

The 5-user load shows a higher throughput than the even higher user loads because of the fixed delay step in the scenario. This step puts a lower limit on how fast the entire scenario can execute, so other users have to wait.

Disadvantages

If the load-balancer offers so many performance benefits, why would you disable it? Below are some reasons to keep the load-balancer disabled:

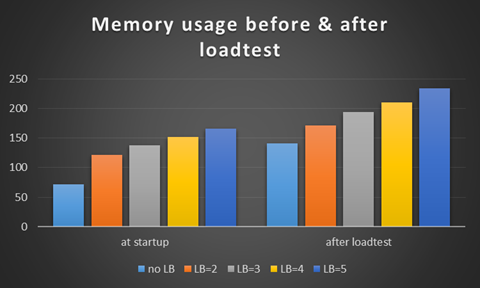

Memory usage

Because multiple independent calculation engines have to be loaded into memory, the memory footprint of the bare application will be higher. However, the increase in memory usage for each additional user should be similar to that of an application without load-balancing.

To illustrate this, we measured the memory usage before and after running a load test for various settings of the load-balancer:

HTML5 only for DateBox and Websliders

For technical reasons, the load-balancer cannot be used when the WebSlider or Datebox control is present in an XHTML 1.0 compliant Futurama Web application. The solution is to use the XSLT file for HTML5, as shown in this page.

Multiple log files

Because every Futurama calculation engine operates independently, we are currently limited in how log files can be written. As a result, every instantiated load-balancing engine writes its own log files, recognizable by the suffix “(instance X)” in the log filename, where “X” indicates the unique identifier of the load-balancing engine.
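When inspecting logs from several engines, it can help to group the files by instance first. The sketch below assumes only the “(instance X)” suffix described above; the file names in the example are hypothetical, and the exact naming and extension of real log files may differ.

```python
import re
from collections import defaultdict

# Matches the "(instance X)" suffix; X is assumed to be letters, digits or "_".
INSTANCE_SUFFIX = re.compile(r"\(instance (\w+)\)")


def group_logs_by_instance(filenames):
    """Map each instance identifier to its log files (None = no suffix)."""
    groups = defaultdict(list)
    for name in filenames:
        match = INSTANCE_SUFFIX.search(name)
        key = match.group(1) if match else None
        groups[key].append(name)
    return dict(groups)


# Hypothetical file names, for illustration only.
example = ["app (instance 1).log", "app (instance 2).log", "errors (instance 1).log", "app.log"]
for instance, files in group_logs_by_instance(example).items():
    print(instance, files)
```

Files without the suffix end up under the key `None`, which corresponds to logs written without the load-balancer.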

Optimized for production environments

The load-balancer is intended as an optimization feature for production-ready applications. This means we do not support the use of Futurama Insight or the ShowNotifier feature with the load-balancer enabled. Should the application need to be debugged in the production environment, the load-balancer can easily be disabled through configuration, so that Insight can be used.

Conclusions

From the presented scenarios, run with a varying number of load-balancing engines and a varying user load, we can draw a number of conclusions:

- The usage of the load-balancer will improve throughput and response-times most during high user load for applications that heavily depend on database operations, web services or complicated algorithms.

- The benefits of load-balancing do not always scale linearly. On heavily loaded applications with complex Futurama calculations, setting the load-balancer to 2 engines will drastically improve the response time, but each further increase in the number of engines will not always yield the same improvement.

- The number of load-balancing engines does not have to be directly related to the number of processors in the server. Because the load-balancer operates within a single process using multiple threads, Windows is capable of spreading this multithreaded load over multiple processors as it sees fit. This can be seen whenever a 5th load-balancing engine is engaged, while only 4 processors exist within the server. The extra work is spread among the 4 processors.

Related Topics